Collaborative Intelligence in Smart and Connected Vehicles

Project Description

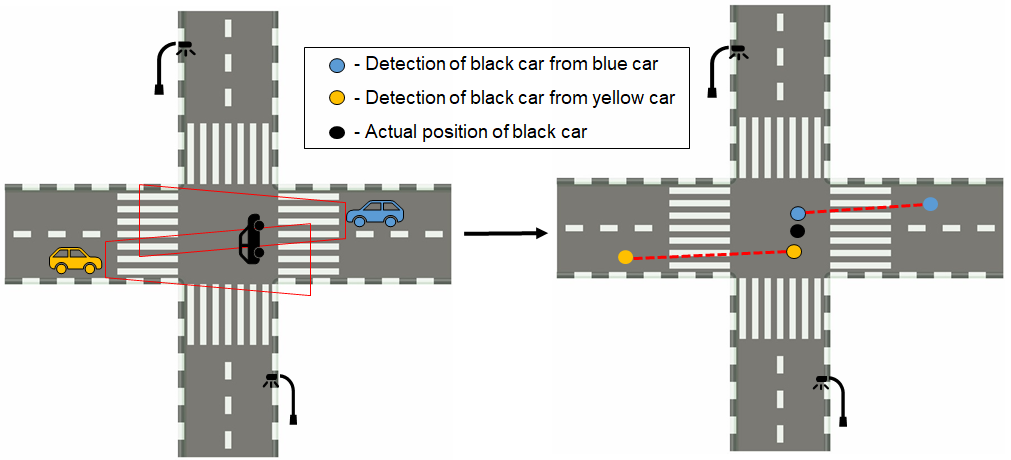

Modern production vehicles are smarter than ever and are equipped with sensors that help them perceive their environment. However, every vehicle sensor has a limited range, and its accuracy can be degraded by factors such as occlusion and adverse weather. Even with the most sophisticated sensor array, a single vehicle can never perceive its surroundings perfectly; there will always be gaps and areas beyond what its sensors can see. Emerging Vehicle-to-Everything (V2X) technologies promise to improve street-level perception by letting multiple vehicles share data such as camera views.

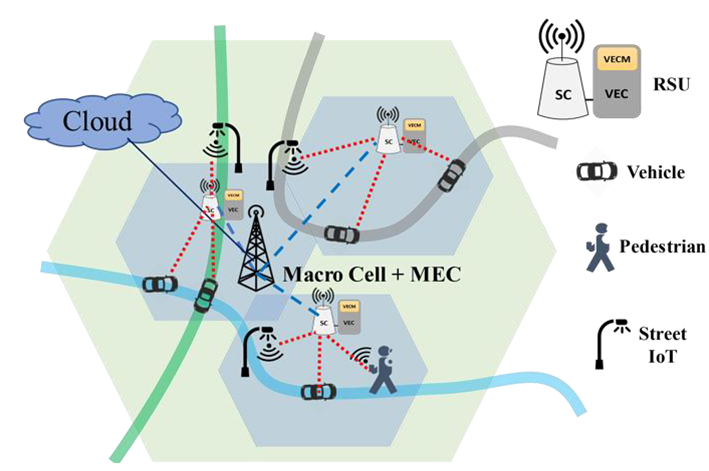

Our projects in collaborative perception aim to improve the situational awareness of connected and autonomous vehicles by having multiple vehicles and smart street IoT devices (such as smart lights and smart intersections) combine their sensor data through a mobile edge computing (MEC) node located at a nearby roadside unit (RSU). We are developing a novel collaborative vision approach in which multiple vehicles (as well as pedestrians and smart street IoT devices) share their sensor data with an RSU MEC node. Through multi-modal data fusion and analytics, the node builds a continuously updated representation of the environment around the vehicles, which the vehicles can then use to better recognize objects in their surroundings. This shared, timely representation aims to give connected and autonomous vehicles a more comprehensive understanding of both nearby and distant road conditions, improving the safety and efficiency of driving. In the first phase of the project, we are using camera, GPS, and IMU data shared by vehicles. Later, we plan to incorporate other types of vehicular sensor data, such as radar and lidar, as well as data from other smart street IoT devices.
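To make the fusion idea concrete, here is a minimal sketch of one step an RSU MEC node could perform with camera, GPS, and IMU data: placing each vehicle's detections into a shared map frame and merging detections that land close together. It assumes each vehicle has already turned camera detections into metric (forward, left) offsets and projected its GPS fix into a local east/north frame in metres, with heading measured counter-clockwise from east. `VehicleReport`, `to_world`, `fuse`, and the 2 m merge radius are hypothetical illustrations, and the greedy merge is a simple stand-in, not the project's actual fusion method.

```python
import math
from dataclasses import dataclass


@dataclass
class VehicleReport:
    """Hypothetical message a vehicle sends to the RSU MEC node."""
    vehicle_id: str
    east_m: float        # GPS position projected to a local east/north frame (metres)
    north_m: float
    heading_rad: float   # IMU/GPS heading, counter-clockwise from east (assumed convention)
    detections: list     # (forward_m, left_m) offsets of detected objects in the vehicle frame


def to_world(report):
    """Rotate each vehicle-frame detection by the heading, then translate by the GPS position."""
    c, s = math.cos(report.heading_rad), math.sin(report.heading_rad)
    points = []
    for fwd, left in report.detections:
        east = report.east_m + fwd * c - left * s
        north = report.north_m + fwd * s + left * c
        points.append((east, north))
    return points


def fuse(reports, merge_radius_m=2.0):
    """Greedy merge: a detection within merge_radius_m of a known object is treated as that object."""
    objects = []  # each entry: [east, north, number_of_observations]
    for report in reports:
        for east, north in to_world(report):
            for obj in objects:
                if math.hypot(obj[0] - east, obj[1] - north) <= merge_radius_m:
                    # Update the running mean of the object's position.
                    n = obj[2]
                    obj[0] = (obj[0] * n + east) / (n + 1)
                    obj[1] = (obj[1] * n + north) / (n + 1)
                    obj[2] = n + 1
                    break
            else:
                # No existing object nearby: start a new one.
                objects.append([east, north, 1])
    return [(e, n, count) for e, n, count in objects]
```

For example, a vehicle at (0, 0) facing east and a vehicle at (20, 0) facing west that both see an object 10 m ahead produce a single fused object at about (10, 0) with two observations, which is how sharing fills in a view that either vehicle alone might have missed.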

Project 1: Vehicle Matching with Non-Overlapping Visual Features

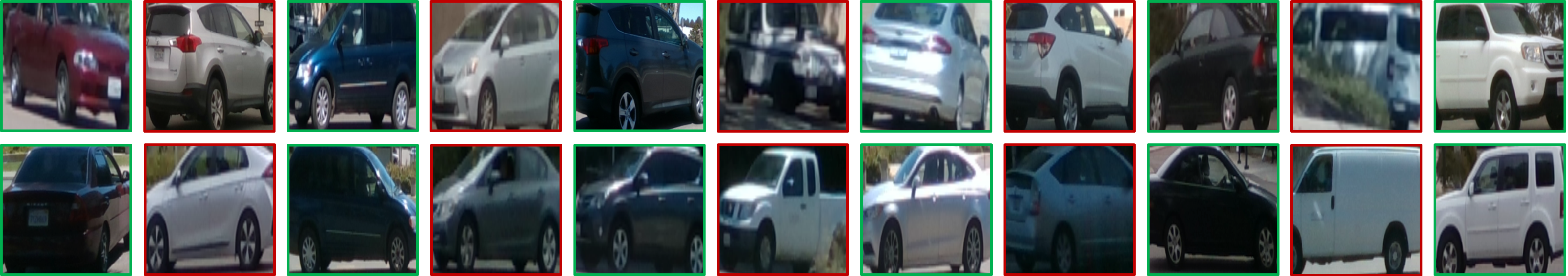

When one examines the challenges of building a complete collaborative perception system, the problem of vehicle matching becomes apparent. The goal of a vehicle matching system is to determine whether images of vehicles captured by different cameras correspond to the same vehicle. Such a system is needed both to avoid duplicate detections of a vehicle seen by multiple cameras and to avoid discarding valid detections because of a false match. One of the most challenging vehicle matching scenarios arises when the cameras have very large viewpoint differences, as is common when they are mounted at geographically separate locations, such as on vehicles and on street infrastructure. In these scenarios, traditional handcrafted features are not sufficient to establish correspondences because the views share few common visual features, so new methods must be developed.
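The learned matching methods themselves are not specified here, so the following is only a hedged sketch of the final association step. It assumes some encoder (hypothetical, not shown) maps each vehicle crop to an embedding vector. Matching then means pairing embeddings across two cameras one-to-one and leaving low-similarity vehicles unmatched, which targets both failure modes described above: a missed match leaves a duplicate detection, while a false match merges two distinct vehicles and discards one of them. `cosine`, `match_vehicles`, and the 0.7 threshold are illustrative assumptions.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length embedding vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def match_vehicles(emb_a, emb_b, threshold=0.7):
    """Greedy one-to-one matching of vehicle embeddings seen by camera A and camera B.

    Returns a list of (index_in_a, index_in_b, similarity). Vehicles left
    unmatched are treated as distinct vehicles seen by only one camera.
    """
    # Score every cross-camera pair, most similar first.
    pairs = sorted(
        ((cosine(a, b), i, j) for i, a in enumerate(emb_a) for j, b in enumerate(emb_b)),
        reverse=True,
    )
    used_a, used_b, matches = set(), set(), []
    for sim, i, j in pairs:
        if sim < threshold:
            break  # all remaining pairs are even less similar
        if i not in used_a and j not in used_b:
            used_a.add(i)
            used_b.add(j)
            matches.append((i, j, sim))
    return matches
```

Greedy assignment keeps the sketch dependency-free; an optimal one-to-one assignment could instead run SciPy's `scipy.optimize.linear_sum_assignment` on the similarity matrix with `maximize=True` and then drop pairs below the threshold. The threshold is a placeholder that would need tuning on validation data, since it directly trades duplicate detections against false matches.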

In this project, we are examining how different machine-learning-based approaches perform in this vehicle matching scenario, which we have termed non-overlapping vehicle matching (NOVeM). In addition to developing methods for this problem, we are recording a dataset to test and validate them. Using multiple cars equipped with stereo cameras and GPS sensors, as well as cameras on street infrastructure, we can record data covering both vehicle-to-vehicle and vehicle-to-street-camera matching, in cases with both overlapping and non-overlapping visual features.

Associated Publications